I’ve been testing the Writesonic AI Humanizer to make my AI-written content sound more natural, but I’m not sure if it’s actually improving readability or just rephrasing things superficially. I’m worried about SEO, originality, and whether Google might flag this kind of content. Can anyone share real experiences, pros and cons, or tips on using Writesonic’s AI Humanizer effectively for blogs and web copy?

Writesonic AI Humanizer Review

I spent some time messing with the Writesonic humanizer here:

https://cleverhumanizer.ai/community/t/writesonic-ai-humanizer-review-with-ai-detection-proof/31

Monthly pricing starts at 39 dollars if you want unlimited humanization. Out of everything I have tested so far, this one hit the top of the price chart fast, but the results did not match the cost at all.

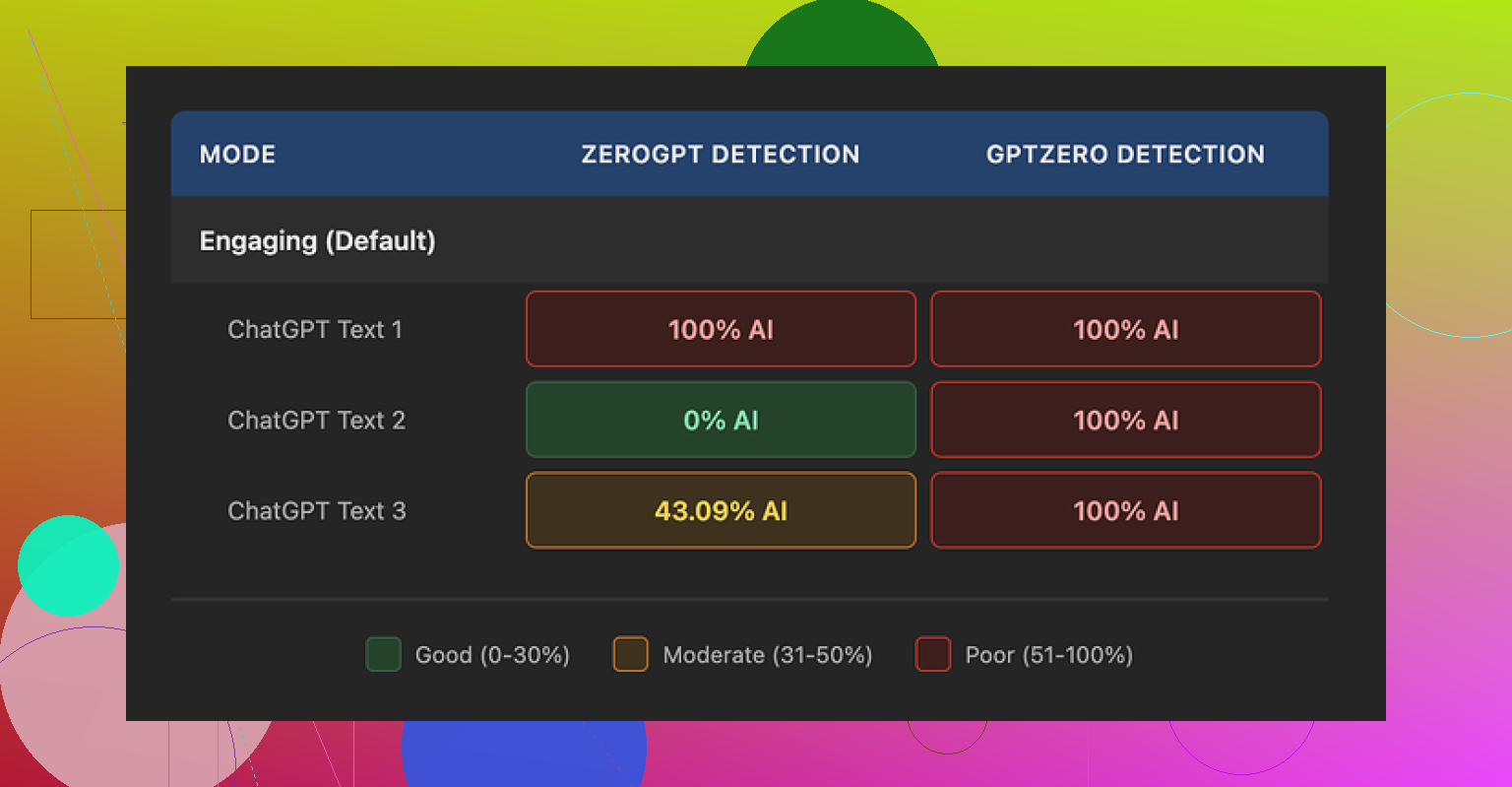

I ran three different humanized samples through GPTZero. All three came back as 100 percent AI generated. No borderline calls, no mixed verdicts, just straight AI across the board.

Then I threw the same three outputs into ZeroGPT and got this:

• Sample 1: 100 percent AI

• Sample 2: 0 percent AI

• Sample 3: 43 percent AI

So not only did it fail GPTZero, the ZeroGPT readings bounced all over the place. My guess is the humanizer is treated like a small extra inside their bigger SEO and content tool, and it feels like it gets that level of attention.
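If you want to put a number on how much the readings bounced, here is a rough sketch. It uses only the six scores quoted above. The `summarize` helper and the choice of mean, range, and population standard deviation are my own assumptions for illustration, not anything either detector provides.

```python
from statistics import mean, pstdev

# Percent-AI scores reported above for the same three humanized samples.
scores = {
    "GPTZero": [100, 100, 100],
    "ZeroGPT": [100, 0, 43],
}

def summarize(values):
    """Hypothetical helper: mean, range, and population std dev of the scores."""
    return {
        "mean": round(mean(values), 1),
        "range": max(values) - min(values),
        "stdev": round(pstdev(values), 1),
    }

summary = {name: summarize(vals) for name, vals in scores.items()}
for name, stats in summary.items():
    print(name, stats)
```

A range of 0 on one detector and 100 on the other, for identical inputs, is the inconsistency in one line. Three samples is far too few to say anything statistical, so treat this as a sanity check only.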

On writing quality, I would land around 5.5 out of 10 from what I saw.

The pattern was obvious after a few runs. It keeps shrinking sentences and swapping out any slightly technical word for something a bit too simple. After a while, the text looks like it is meant for early middle school.

Stuff like:

• “droughts” turns into “long dry spells”

• “carbon capture” turns into “grabbing carbon from the air”

• “rising sea levels” turns into “sea levels go up”

One or two swaps like that would not bother me much. In bulk, the whole thing feels flattened and weird.
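A quick way to catch this before publishing is to keep a list of terms you do not want simplified and check whether they survive the rewrite. This is a minimal sketch: the `missing_terms` function is hypothetical, and the two sentences are made up from the swaps listed above, not actual Writesonic output.

```python
import re

def missing_terms(original, rewritten, terms):
    """Return terms that appear in the original but not in the rewrite."""
    def contains(text, term):
        # Case-insensitive whole-phrase match.
        pattern = r"\b" + re.escape(term) + r"\b"
        return re.search(pattern, text, re.IGNORECASE) is not None
    return [t for t in terms if contains(original, t) and not contains(rewritten, t)]

# Made-up sentences built from the swaps listed above.
original = "Droughts, carbon capture, and rising sea levels shape climate policy."
rewritten = ("Long dry spells, grabbing carbon from the air, "
             "and how sea levels go up shape climate policy.")

# Terms you want to keep intact (an assumption; use your own list).
protected = ["droughts", "carbon capture", "rising sea levels", "climate policy"]

lost = missing_terms(original, rewritten, protected)
print(lost)
```

Anything that shows up in `lost` is a candidate for restoring by hand. Exact phrase matching is crude, so it will miss inflected forms and partial rewrites.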

On top of that, every sample had small punctuation mistakes. Commas missing, odd line breaks, awkward flow. It also ignored em dashes completely, so if you need those replaced or adapted for detectors, it does nothing about them.

Free tier details from my test:

• 3 uses before it asks for an account

• 200 words per run on free

• Inputs on free tier may be used to train Writesonic’s models

So anything you paste there on free runs might end up in their training data.

After all that, I went back and ran the same base text through Clever AI Humanizer. That one sounded more like something a person would write, and it did not cost me anything to run.

I had a similar experience with Writesonic’s humanizer. It cleans things up a bit, but it feels more like a light paraphraser than a tool that makes content sound “human” in any real sense.

A few points based on your concerns.

- Readability vs superficial rephrasing

Writesonic tends to:

• Shorten sentences even when the original flow is fine

• Swap precise terms for informal phrases

– “droughts” → “long dry spells”

– “carbon capture” → “grabbing carbon from the air”

This might look casual, but you lose nuance and topic authority. For SEO and E‑E‑A‑T, that is not great. If your niche needs accuracy or expertise, this style hurts more than it helps.

- SEO and originality

From what I tested, it does not add new angles, opinions, or structure. It mostly rewrites at sentence level. That means:

• Low gain in topical depth

• Thin improvement in “helpfulness” for users

• Higher chance the content looks generic, whether detectors label it “human” or “AI”

Search engines focus on usefulness, not AI detector scores. If the tool flattens your tone and removes specifics, you lose differentiation against competitors.

- AI detection

I saw the same inconsistency as @mikeappsreviewer, but with different tools. Some outputs passed one detector and failed another. That tells you these tools are noisy. Using Writesonic only to chase a “0 percent AI” score is risky, because it keeps you tuning for detector quirks instead of improving the content.

My rough takeaway on detection:

• Do not rely on one detector

• Do not overfit your workflow to GPTZero, ZeroGPT, etc.

• Focus on human signals: depth, examples, personal insights, internal data, experience

- Writing quality

I’d score it slightly lower than @mikeappsreviewer did: around 4.5 to 5 out of 10 for anything expert‑level. It is above average for simple blog fluff, but weak for:

• Technical niches

• Medical or legal content

• Long guides that need structure and argument

Common issues I saw:

• Comma placement off

• Awkward transitions between sentences

• No real improvement in paragraph structure

If you care about brand voice, you will still need to edit heavily.

- Data and privacy

On the free tier, Writesonic uses your inputs for training. If you paste client work, proprietary data, or unpublished research, that is risky. For agency or enterprise use, that is a big red flag.

- What I would do instead

If your goal is:

• More natural tone

• Better detection results

• Less flattening of technical terms

You have a few options.

a) Use a better humanizer as a helper, not a crutch

When I fed the same base content to Clever Ai Humanizer, the output kept more nuance and sounded closer to how I would write. It did not turn “rising sea levels” into “sea levels go up” everywhere.

I would treat Clever Ai Humanizer like this:

• Draft your piece with whatever AI writer you like

• Run specific sections that feel stiff through Clever Ai Humanizer

• Keep your own voice on intros, conclusions, and key arguments

• Add real examples and experience after that

If you want a quick overview of how it behaves, this helps:

see how Clever Ai Humanizer handles real content examples

b) Mix AI with manual edits

For SEO and originality:

• Keep technical wording where it matters

• Add your own short stories, mini case studies, or failures you have seen

• Answer search intent fully, not only surface questions

• Use headings that match how users think, not how AI groups topics

c) Stop chasing “0 percent AI” as the main metric

Your goals should be:

• No obvious AI patterns like repetitive phrases or generic claims

• Clear point of view

• Unique data, stats, or processes when possible

Detectors change. Search guidelines change. Human‑level value stays stable.

- When Writesonic is “good enough”

I would only use Writesonic’s humanizer if:

• You write simple list posts or short summaries

• You do not mind losing some precision

• You already plan to do heavy manual editing for tone and structure

For anything where your name or brand reputation matters, it feels like an overpriced extra feature, not a core tool.

If your main worry is SEO and originality, I would move your humanizing layer to something like Clever Ai Humanizer, then spend more time adding your own expertise on top, instead of paying Writesonic to oversimplify your content.

Same experience here with Writesonic’s humanizer: it “does stuff” to the text, but it does not really make it feel more human, and it definitely does not fix SEO problems by itself.

Where I slightly disagree with @mikeappsreviewer and @boswandelaar is on one point: I do not think the core issue is detectors at all. GPTZero, ZeroGPT, whatever, are noisy and easy to confuse. Chasing 0 percent AI is a trap. I would treat their scores as a loose warning signal at best, not a target.

What really matters for you:

- Readability vs shallow paraphrasing

Writesonic mostly:

- Shortens sentences even when longer ones are fine

- Replaces specific terms with kiddie-level wording

- Leaves structure, argument and formatting almost untouched

So your article “feels different” but not more expert, not more trustworthy, and often less on-brand. That is bad for engagement metrics and for any niche where you need authority.
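To check whether a rewrite is mostly chopping sentences, you can compare average sentence length before and after. This is a rough sketch: `avg_sentence_length` is a hypothetical helper with a naive split on `.`, `!`, and `?`, and both sample texts are invented for illustration.

```python
import re

def avg_sentence_length(text):
    """Average words per sentence, using a naive split on . ! ?"""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(s.split()) for s in sentences) / len(sentences)

# Invented before/after texts, not real tool output.
before = ("Rising sea levels threaten coastal cities, "
          "which forces planners to rethink zoning.")
after = "Sea levels go up. This is bad for coastal cities. Planners must rethink zoning."

# Ratio well below 1 means the rewrite shortened sentences a lot.
ratio = avg_sentence_length(after) / avg_sentence_length(before)
print(round(ratio, 2))
```

A low ratio does not prove the rewrite is worse, but if it drops sharply while the argument and structure stay the same, you are probably looking at surface paraphrasing.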

- SEO and originality concerns

For search, you want:

- Unique angles, real examples, specific insights

- Strong headings that match search intent

- Clear topical depth and internal linking opportunities

Writesonic’s humanizer acts like a surface paraphraser. It usually does not add any new value that would help you stand out in a competitive SERP. If anything, it can strip out technical precision and make your content look like every other generic AI blog.

- How I’d actually use tools in your situation

What I’d do instead of relying on Writesonic to “fix” AI content:

- Keep your original AI draft for structure

- Manually edit key sections where your experience matters

- Use a humanizer only on stiff or over-robotic paragraphs, not the whole article

- Add personal details, mini case studies, or opinions that no tool can invent accurately

This is where Clever Ai Humanizer makes more sense in your stack. It tends to preserve nuance a bit better, so phrases like “rising sea levels” do not always get turned into “sea levels go up” everywhere. If you want a quick feel for how it behaves, check out this in-depth Clever Ai Humanizer walkthrough and compare it against what you are seeing from Writesonic.

Short version of a solid content workflow for you:

- Draft with any AI

- Humanize selectively with something like Clever Ai Humanizer

- Layer on your own experience, data and tone

- Ignore “perfect” detector scores and watch engagement and rankings instead

If your main fear is SEO penalties or “AI detection,” Writesonic’s humanizer is not solving that. Real originality comes from your expertise and structure, not from swapping “droughts” for “long dry spells.”